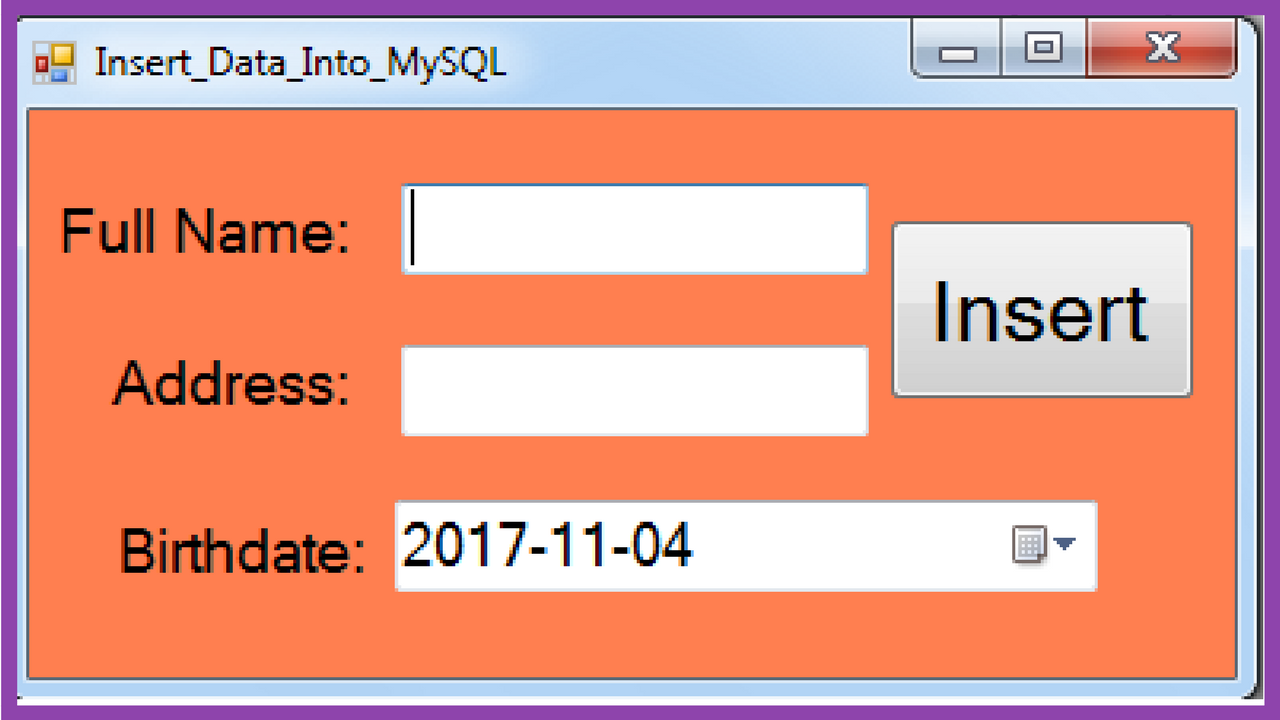

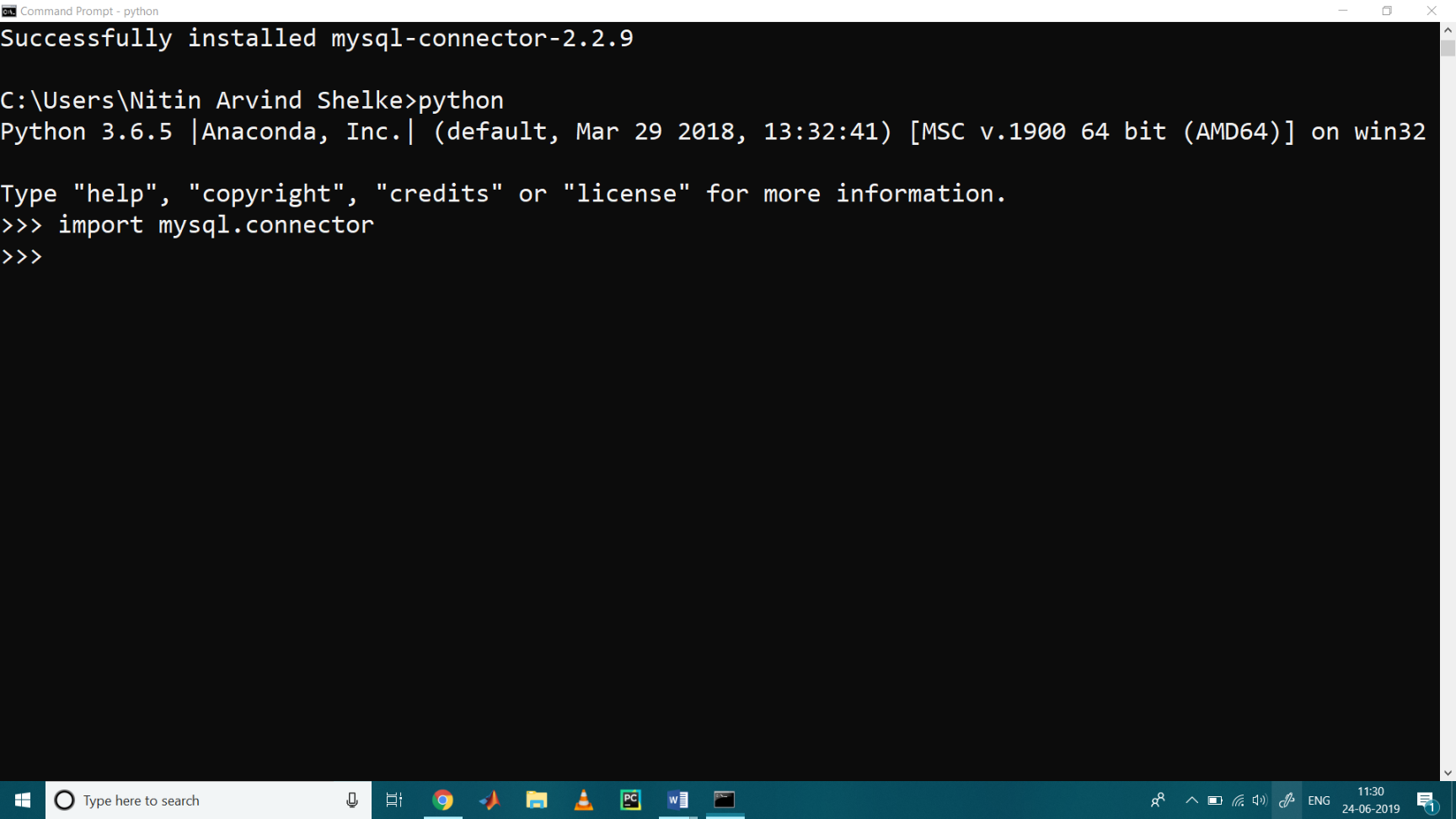

Install the dependencies with `pip install sqlalchemy` and `pip install mysqlclient` in the command terminal, then build a connection string and create an engine through SQLAlchemy's `create_engine`, with the data held in a DataFrame (`data_frame`). I'm using the SQLAlchemy library to speed up bulk inserts from a CSV file into a MySQL database through a Python script. The data is inserted into the database as text, so afterwards connect to the database workbench and change the column data types; the data is then ready to use.

To fill a table in MySQL, or to add new rows to an existing table, use the `INSERT INTO` statement. In it you need to specify the name of the table and the column names. To insert a single record into the `customers` table, start with `import mysql.connector`. To insert multiple rows, use the `executemany()` method: it takes the query as its first parameter and a list of tuples containing the data as its second. The overall exercise is to configure MySQL Connector on your system, insert data into a MySQL table, and then fetch and display the data from that table.

For JSON data, you can create a helper with `mysql = MySQLUtil()`, make a connection to the database, store the JSON data in a variable, and then call its `execSql()` method to insert the data.

Of the four approaches compared, the odo method is the fastest (it uses MySQL `LOAD DATA INFILE` under the hood). Next is Pandas (its critical code paths are optimized). Next is using a raw cursor but inserting rows in bulk. Last is the naive method, committing one row at a time. The timings were taken locally against a local MySQL server, and the naive implementation is ~100 times slower than odo.

The benchmark script builds its insert as `query = 'INSERT INTO (id, col1, col2, col3, col4) VALUES(%s, %s, %s, %s, %s)'`, resets each table with `engine.execute("DROP TABLE IF EXISTS table%s" % i)`, and checks the result with `count = pd.read_sql('SELECT COUNT(*) as c FROM table%s' % i, con=uri)` followed by `print("Count for table%s - %s" % (i, count))`.
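The raw-cursor bulk approach described above can be sketched roughly as follows. This is a minimal illustration, not the benchmark's actual code: the function names `build_insert_query`, `batches`, and `bulk_insert` are hypothetical, the connection is assumed to be any DB-API 2.0 connection, and the table and column names are assumed to come from trusted code because they are interpolated directly into the SQL.

```python
from itertools import islice


def build_insert_query(table, columns, placeholder="%s"):
    """Return a parameterized INSERT statement for the given table and columns.

    MySQL drivers such as mysqlclient and PyMySQL use "%s" as the placeholder;
    other DB-API drivers may differ (sqlite3 uses "?").
    """
    column_list = ", ".join(columns)
    value_list = ", ".join([placeholder] * len(columns))
    return f"INSERT INTO {table} ({column_list}) VALUES ({value_list})"


def batches(rows, size):
    """Yield lists of at most `size` rows from any iterable."""
    iterator = iter(rows)
    while True:
        batch = list(islice(iterator, size))
        if not batch:
            return
        yield batch


def bulk_insert(connection, table, columns, rows, placeholder="%s", batch_size=1000):
    """Insert rows in batches with executemany() and commit once at the end."""
    query = build_insert_query(table, columns, placeholder)
    cursor = connection.cursor()
    total = 0
    try:
        for batch in batches(rows, batch_size):
            # One call per batch instead of one call (and commit) per row.
            cursor.executemany(query, batch)
            total += len(batch)
        connection.commit()
    finally:
        cursor.close()
    return total
```

With a MySQL driver you would pass in the connection you already opened and leave `placeholder` at its default. The single commit at the end is the main difference from the naive method, which commits after every row.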
To store your JSON data in MySQL from Python, you can create a `MySQLUtil` helper and use it to insert the JSON data.

With PyMySQL, start with `import pymysql`, connect to the database with `connection = pymysql.connect(host='localhost', user='', password='', db='')`, create a cursor with `cursor = connection.cursor()`, and insert the DataFrame records one by one. Alternatively, read the CSV data row by row or all together and insert it into the table.

Suppose the rows of your SQL query's result set contain 4 values each: you just need to give these fields names and create a dict to insert into your NoSQL database.

The code you are using is very inefficient for a number of reasons, chiefly that you are committing your data one row at a time. That is what you would want for a transactional database or process, but not for a one-off dump. There are a number of ways to speed this up, ranging from great to not so great. Here are 4 approaches, including the naive implementation.

The benchmark script (`#!/usr/bin/env python`) generates random test columns such as `col1=np.random.choice(, n)`, `col2=np.random.randint(-1000000, 1000000, n)`, and `col3=np.random.randint(-9000000, 9000000, n)`, and writes the frame out with `df.to_csv('tmp.csv', ...)`. The Pandas variant, `using_pandas(table_name, uri)`, calls `df.to_sql(table_name, con=uri, if_exists='append', ...)`; the odo variant is `using_odo(table_name, uri)`.
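Reading a CSV and inserting it in batches, rather than committing row by row, might look something like the sketch below. It uses only the standard `csv` module; the names `read_csv_rows` and `load_csv` are hypothetical, the `query` string is assumed to be a parameterized `INSERT` supplied by the caller, and `converters` is an assumed convenience (one callable per column) for turning text fields into proper types before they reach the database.

```python
import csv


def read_csv_rows(file_obj, converters=None, has_header=True):
    """Yield one tuple per CSV row, applying an optional converter per column."""
    reader = csv.reader(file_obj)
    if has_header:
        next(reader, None)  # skip the header line if there is one
    for record in reader:
        if not record:
            continue  # ignore blank lines
        if converters:
            # Expects exactly one converter per column, in column order.
            record = [convert(value) for convert, value in zip(converters, record)]
        yield tuple(record)


def load_csv(connection, file_obj, query, converters=None, batch_size=1000):
    """Stream a CSV into a table in batches, committing once at the end."""
    cursor = connection.cursor()
    batch, total = [], 0
    try:
        for row in read_csv_rows(file_obj, converters):
            batch.append(row)
            if len(batch) >= batch_size:
                cursor.executemany(query, batch)
                total += len(batch)
                batch = []
        if batch:
            # Flush the final, possibly smaller, batch.
            cursor.executemany(query, batch)
            total += len(batch)
        connection.commit()
    finally:
        cursor.close()
    return total
```

Converting values in Python is one way to avoid everything landing in the table as text. For very large files, the `LOAD DATA INFILE` route that odo relies on is likely to remain faster than any client-side loop like this one.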
